I grew up close enough to a large dam that we lived with its siren. Once a year, on the first Wednesday in February at 1:30 pm, they test it.

Everyone knew the rule: if it ever sounded outside the test, you did not pause to discuss it, check the news, or wait for the neighbor across the street to confirm. You went uphill, fast.

That is why you drill children on things like this: so that, as adults, they do not waste time deliberating when deliberation is already too slow.

On April 7th, the AI industry had one of those moments.

A company chose not to release a model because it was too useful at finding weaknesses. To me, that was the alarm sounding in the software industry — and, as far as I can read it, this is not a test.

The alarm has a name: Mythos.

What actually happened

Anthropic, the company behind Claude, introduced Project Glasswing, an initiative built around an unreleased model called Claude Mythos Preview, and then did something the AI industry almost never does: it withheld the model from broad public release. Anthropic says it is instead giving selected defenders a head start because the model has reached a level where it can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

The launch partners in Project Glasswing include AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks — in other words, organizations responsible for a meaningful share of the digital infrastructure the rest of us depend on.

Anthropic’s own technical write-up is striking. It says Mythos Preview found a now-patched 27-year-old OpenBSD bug, autonomously identified a 16-year-old FFmpeg vulnerability, and surfaced thousands of additional high- and critical-severity vulnerabilities that Anthropic says it is working to disclose responsibly.

Even if some of the surrounding public narrative turns out to be overstated, the underlying signal is hard to miss: frontier models are becoming powerful vulnerability-discovery engines faster than most defensive practices are adapting.

Mythos does not create that trend. It compresses the timeline.

Why this matters for asset owners

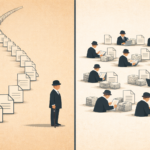

If you run a family office or oversee significant private wealth, you have always lived with a structural asymmetry: you may oversee assets on the scale of a regional bank, yet operate with the staff of a small accounting firm.

That asymmetry is now colliding with a threat environment that is scaling faster than most organizations can adapt. In J.P. Morgan Private Bank’s 2026 Global Family Office Report, 32% of family offices cited cybersecurity as a top service need as offices digitize and aggregate more data. Deloitte reports that 43% of family offices experienced a cyberattack in the last 12 to 24 months, while 31% say they still do not have a cybersecurity strategy in place. A BlackCloak report cites recent studies showing that 83% of U.S. single-family offices rank cyber risk as a top concern.

Those concerns are well placed. In a widely reported Arup case, fraudsters used deepfake video and audio to impersonate senior colleagues on a call and trick an Arup employee into authorizing roughly $25 million in transfers. That case matters because it demonstrates, in plain language, that “I know the voice” and “I saw the face” are no longer reliable controls.

This is the real implication of Mythos for asset owners. It is not merely that AI can help defenders. It is that AI is improving the offensive toolkit as well, and lean organizations with concentrated assets, fragmented systems, and heavy reliance on third parties can become very attractive targets. That is especially true when decision-making is centralized, staff are trusted, and workflows still rely on recognition, habit, and speed.

Five categories of exposure

1. Third-party concentration

Family offices depend heavily on external providers: investment managers, custodians, administrators, reporting platforms, legal advisers, tax professionals, and technology vendors.

That means the real attack surface is often made up of other people’s systems. The leaner an office is run, the more likely it is that a compromise at a service provider can cascade into the office itself. Project Glasswing exists precisely because software vulnerabilities in widely used systems can become systemic very quickly once discovery and exploitation accelerate.

2. AI-enabled impersonation

Synthetic voice and video are no longer curiosities. They are operational attack tools.

Any approval workflow that relies on recognizing a familiar voice, face, or writing style should now be treated as bypassable. The Arup case is the clearest public example, but the principle is broader: once authentication becomes “does this seem like them?”, attackers have room to operate.

3. Shadow AI and data leakage

Sensitive material — trust structures, tax returns, family information, legal drafts, deal documents, and investment memos — is increasingly being handled with consumer AI tools outside formal governance.

Usually, this is not malicious. It is convenient. That is exactly why it is dangerous. J.P. Morgan’s report shows strong family-office interest in AI, while the broader family-office cyber literature shows that operational controls are still catching up. In practice, that combination often means enthusiasm arrives before policy does.

4. Patch lag and legacy exposure

One of the most unsettling parts of the Mythos story is not that the model found bugs. It is the age of some of the bugs it found.

Anthropic says Mythos identified a 27-year-old OpenBSD bug and a 16-year-old FFmpeg vulnerability, along with many other serious findings. The lesson for asset owners is not that their office suddenly needs frontier-model expertise. It is that many organizations systematically overestimate the health of the systems they rely on. Legacy exposure and patch lag persist because old software, vendor dependencies, and operational inertia persist.

5. Governance

Cyber risk is no longer just a technical problem. It is a governance problem.

Deloitte’s findings are telling: many family offices have experienced attacks, yet a meaningful share still lack a cybersecurity strategy. In a family-office context, that usually means the issue has not yet been elevated to principal-, board-, or trustee-level ownership. When that happens, cybersecurity gets treated as an IT expense rather than a control system for protecting assets, privacy, continuity, and reputation.

A practical action framework

Material progress does not require building an in-house security operation. It requires discipline across a small number of areas.

1. Inventory critical systems and dependencies

Document every system and provider that touches money movement, identity, custody, or sensitive data.

Most offices discover, once they do this properly, that they rely on more critical identity systems than they can easily name: email, custodians, reporting portals, administrator logins, document-sharing systems, personal devices, and often the accounts of key staff or family members.

Ask each strategic vendor, in writing, how they are addressing AI-augmented threats, vulnerability disclosure, and incident response. The quality and specificity of the answer are themselves a signal. The existence of Project Glasswing is a reminder that the security of common software dependencies is now moving to the center of the risk discussion.
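For offices that prefer to keep this inventory in something more structured than a spreadsheet, here is a minimal sketch in Python. Everything in it is illustrative: the `Dependency` record, its field names, and the `open_questions` helper are hypothetical names I made up for this example, not a standard or a product.

```python
from dataclasses import dataclass


@dataclass
class Dependency:
    """Hypothetical inventory entry for one system or provider."""
    name: str
    touches: set[str]           # subset of: "money", "identity", "custody", "data"
    owner: str                  # person in the office accountable for it
    vendor_reply_on_file: bool  # written answer to the vendor questions received?


def open_questions(inventory: list[Dependency]) -> list[str]:
    """Names of critical dependencies with no written vendor reply yet.

    A dependency counts as critical here if it touches at least one of the
    four categories; that threshold is an assumption of this sketch.
    """
    return [
        d.name
        for d in inventory
        if d.touches and not d.vendor_reply_on_file
    ]
```

The value is less in the code than in the habit it forces: every entry needs a named owner, and every critical entry needs a written vendor answer on file.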

2. Strengthen approval and verification controls

Any change to wiring instructions or release of funds should require dual authorization and out-of-band verification.

Critically, verification should use a contact method sourced independently — not from the request itself. Email alone is not enough. Voice or video alone is no longer enough, either. A short call to a known number or a callback via a pre-established verification channel remains one of the most effective controls against deepfake-enabled fraud.
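If your payment workflow is encoded anywhere in software, the rule above can be sketched roughly as follows. This is a simplified illustration in Python, not a production control. The `PaymentRequest` type, its fields, and `may_release` are hypothetical names, and I am assuming that "dual authorization" means two approvers other than the person who raised the request.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentRequest:
    """Hypothetical record of a funds-release or wiring-change request."""
    requester: str
    approvers: set[str] = field(default_factory=set)
    # Where the callback contact came from: "request" means it was copied
    # from the message asking for the payment; "directory" means it came
    # from contact details the office already held on file.
    callback_source: str = "request"
    callback_confirmed: bool = False


def may_release(req: PaymentRequest) -> bool:
    """Return True only if both controls described in the text are met."""
    # Dual authorization (assumption: two approvers, neither the requester).
    independent_approvers = req.approvers - {req.requester}
    dual_authorized = len(independent_approvers) >= 2

    # Out-of-band verification: confirmed through a contact method that was
    # not supplied by the request itself.
    verified_out_of_band = (
        req.callback_confirmed and req.callback_source == "directory"
    )
    return dual_authorized and verified_out_of_band
```

Note the defaults: a request starts out unverified and unapproved, so it fails closed unless someone does the work. A convincing voice or face never appears as an input.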

3. Raise the identity-assurance baseline

Multi-factor authentication should be mandatory across all critical systems.

Where possible, move beyond SMS-based factors. Phishing-resistant hardware security keys provide materially stronger protection, especially for principals, finance staff, and administrative accounts. If attackers increasingly target identity, the control surface must shift toward stronger proof-of-identity.
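A quick way to see where you stand is to list accounts against that baseline. The sketch below is one possible shape for such a check, written in Python. The `Account` record, the factor labels ("sms", "totp", "hardware_key"), and the `baseline_gaps` function are all assumptions of this example; a real export from your identity provider will look different.

```python
from dataclasses import dataclass

# Assumption for this sketch: factor labels are simple strings chosen here.
PHISHING_RESISTANT = {"hardware_key"}


@dataclass
class Account:
    """Hypothetical account record for an MFA review."""
    user: str
    high_risk: bool    # principal, finance staff, or administrative account
    factors: set[str]  # e.g. {"sms"}, {"totp"}, {"hardware_key"}


def baseline_gaps(accounts: list[Account]) -> list[tuple[str, str]]:
    """Return (user, gap) pairs for accounts that fall short of the baseline."""
    gaps = []
    for a in accounts:
        if not a.factors:
            gaps.append((a.user, "no MFA"))
        elif a.high_risk and not (a.factors & PHISHING_RESISTANT):
            gaps.append((a.user, "no phishing-resistant factor"))
        elif a.factors == {"sms"}:
            gaps.append((a.user, "SMS only"))
    return gaps
```

The ordering of the checks reflects the priorities in the text: missing MFA first, then high-risk accounts without a hardware key, then SMS-only accounts elsewhere.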

4. Put AI use under formal policy

Explicitly define which information may not be entered into public AI tools.

At the same time, provide a sanctioned alternative. Prohibitions without a usable workflow tend to fail quietly. J.P. Morgan’s report suggests interest in AI is already widespread among family offices; the operational question is whether that adoption is being channeled through policy, access controls, and approved tools, or through improvisation.

5. Maintain disciplined patching and tested recovery

Establish a regular cadence for patch review, with priority given to actively exploited vulnerabilities and critical vendor dependencies.

Just as important, test backups and recovery procedures under realistic conditions. Old vulnerabilities persist because patching is hard, but recovery often fails because it was never truly rehearsed. If you only discover broken backups during a crisis, you do not have backups; you have assumptions.
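The prioritization described above can be made explicit as a simple ordering rule. Here is a minimal sketch in Python. The `PendingPatch` record and `review_order` function are hypothetical, and the tie-breaker (longest outstanding first) is my own assumption rather than an established standard.

```python
from dataclasses import dataclass


@dataclass
class PendingPatch:
    """Hypothetical entry in a patch-review list."""
    system: str
    actively_exploited: bool   # e.g. flagged by the vendor or a public advisory
    critical_dependency: bool  # touches money movement, identity, custody, or data
    days_outstanding: int


def review_order(patches: list[PendingPatch]) -> list[PendingPatch]:
    """Sort so actively exploited issues come first, then critical
    dependencies, then whatever has been waiting longest."""
    return sorted(
        patches,
        key=lambda p: (
            not p.actively_exploited,   # False sorts before True
            not p.critical_dependency,
            -p.days_outstanding,
        ),
    )
```

A rule like this will not patch anything for you, but it does make the review meeting shorter: the first item on the list is the one to discuss first.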

6. Run scenario exercises at the principal level

At least twice a year, run a tabletop exercise that includes the people who actually make decisions.

One scenario should involve a breach by a third-party provider. Another should involve an AI-enabled fraudulent payment request using synthetic voice or video. The point is not technical perfection. The point is to ensure escalation paths, decision rights, and communication work when time matters. The governance gap in family offices is often less about tools than about clarity.

The siren on the hill

The Mythos announcement is the alarm sounding, and this one does not look like a February drill.

The right response is not panic. But it is also not a meeting next quarter.

It is movement.

Higher ground, in practice, is not complicated: dual approvals, second channels, hardware keys, a clear map of dependencies, tighter AI-use rules, and direct conversations with the people who run the systems you rely on.

The point of a siren is not to prove the flood. It is to remove the need to wait for it.

Disclaimer

This post reflects my personal views and is provided for general information only. It is not investment, legal, tax, or cybersecurity advice. Please consult appropriately qualified professionals for advice on your specific circumstances.