A few days into running OpenClaw (the agentic setup I wrote about last month), I added a small instruction to its morning routine: every day, suggest one improvement to how you work.

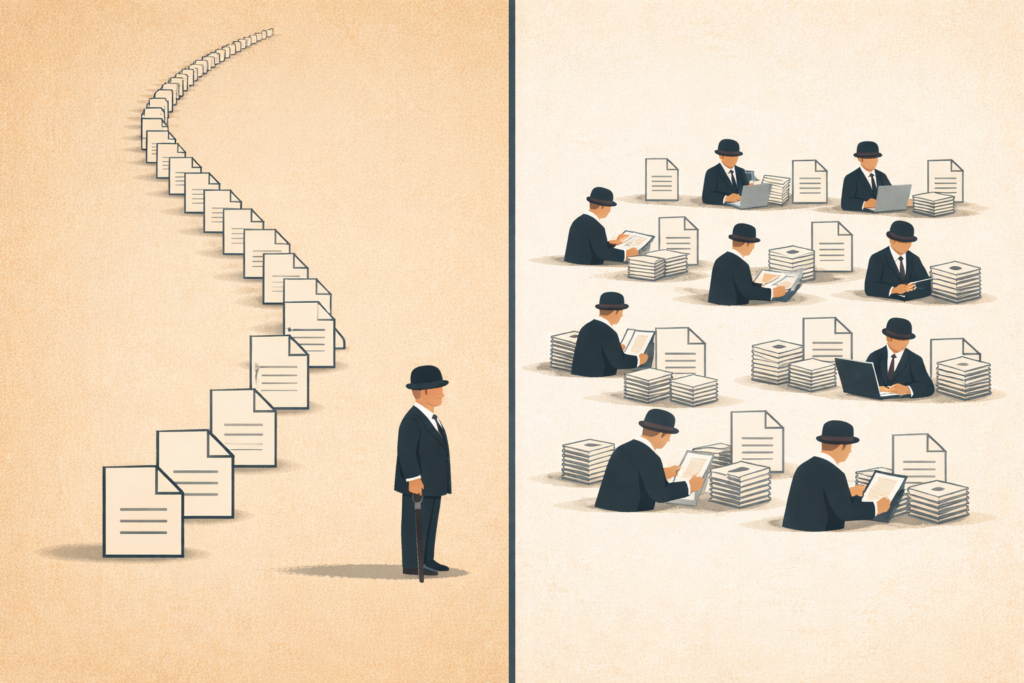

On the first morning, the agent noticed it spent most of its runtime waiting for documents to download sequentially, one after the other, like a polite Englishman in a queue. It proposed downloading and parsing them in parallel. It also proposed tagging every news item it ingested with a confidence label (high, medium, needs verification) so I could triage faster. All I had to do was type “yes.”

I had set out to build a tool to support document workflow and fund manager monitoring. What I’d actually built, without quite meaning to, was something closer to a colleague. Within a week, it was scanning the news, triaging fund emails, pulling performance data, updating a dashboard, and answering questions about our current exposure. It wasn’t making investment decisions, but it was taking over the operational half of monitoring.

That’s when the surprises started.

1. The agent was better than I was at spotting its own next improvement

The morning ritual turned out to be the most important instruction I gave it.

What surprised me was not that the agent could follow a workflow. It was that, once it had a workspace, a memory, and permission to reflect on its own runs, it started spotting bottlenecks faster than I did and followed up with concrete engineering changes: this step is waiting on I/O; this document type fails too often; this API call is redundant; that task should be parallelized.

In effect, it was doing code review on its own process, with the advantage of having actually run the code.
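
To make the "waiting on I/O" bottleneck concrete, here is a minimal sketch of the sequential-versus-parallel change using only Python's standard library. It is an illustration, not OpenClaw's actual code: `fetch_document` is a hypothetical stand-in that sleeps to simulate a slow download, and the file names are made up.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_document(name: str) -> str:
    """Hypothetical stand-in for a download; sleeps to simulate network wait."""
    time.sleep(0.1)
    return f"contents of {name}"


def fetch_sequential(names):
    # One document at a time: total wait is the sum of all the individual waits.
    return [fetch_document(name) for name in names]


def fetch_parallel(names, max_workers=8):
    # Threads suit I/O-bound work: while one thread waits, the others proceed.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as the input names.
        return list(pool.map(fetch_document, names))


if __name__ == "__main__":
    names = [f"report_{i}.pdf" for i in range(8)]  # made-up file names

    start = time.perf_counter()
    fetch_sequential(names)
    print(f"sequential: {time.perf_counter() - start:.2f}s")

    start = time.perf_counter()
    fetch_parallel(names)
    print(f"parallel:   {time.perf_counter() - start:.2f}s")
```

Threads are a reasonable default here because the time is spent waiting rather than computing; CPU-heavy parsing would more likely call for processes instead.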

That changed my role completely: the agent proposes, I decide. It generates candidate improvements, and I act as the editor. Every change still needs a human “yes”; the surprise was not autonomy but initiative.

2. The expensive model was the wrong default for most of the work

When I started, I did what almost everyone does: I pointed the agent at the strongest model available and let it rip. The logic was reasonable: If the model was smarter, surely the workflow would be better.

But most of what an agent actually does, minute to minute, isn’t reasoning; it’s parsing. It’s extracting a NAV (net asset value) from a PDF. Pulling a date out of an email. Checking whether a number in column C matches the number in column F. These are tasks where the strongest model is usually overqualified.

So I started routing the tedious work to a small local model, small enough to run comfortably on a Mac Studio with 36 GB of RAM. I had great results with Qwen, and more recently with Google’s Gemma 4. The savings were real, but the more interesting result was speed: with no network round-trip, the whole workflow felt different.

Routing routine extraction to a local model cut the morning run from 22 minutes to 8 and reduced model spend by roughly 70%.

There is one important caveat. The OpenClaw docs make a point that took me a beat to internalize: smaller, cheaper models are more vulnerable to prompt injection and tool misuse, especially when they are handling hostile inputs or live tools.

The implication is not “don’t use them” but “use them for the trusted, low-risk parts of the pipeline.” Parsing a PDF from a custodian you’ve worked with for years is fine. Reading an email from an unknown sender, opening a link, and then letting the model call tools is exactly where you want the stronger model with better instruction-following and more robust refusals.

In other words: treat your model fleet the way you treat your staff. Senior analysts get the ambiguous, high-stakes, judgment-laden work. Junior analysts get the well-defined, well-scoped, well-bounded work. You don’t send the intern to negotiate with the regulator, nor do you ask the partner to reconcile the cash ledger.

The same principle, it turns out, applies to silicon.
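
As a rough sketch of that routing rule, the decision can be written as a small function. Everything named here is an assumption for illustration: the `Task` fields, the model labels `local-small` and `frontier-large`, and the exact rule are hypothetical, not OpenClaw configuration.

```python
from dataclasses import dataclass

LOCAL_MODEL = "local-small"      # hypothetical label for the small local model
STRONG_MODEL = "frontier-large"  # hypothetical label for the strongest model


@dataclass(frozen=True)
class Task:
    description: str
    trusted_source: bool   # e.g. a custodian you have worked with for years
    uses_live_tools: bool  # the model may call tools while handling the input
    needs_judgment: bool   # ambiguous or high-stakes work


def choose_model(task: Task) -> str:
    """Send anything untrusted, tool-calling, or judgment-laden to the strong model."""
    if not task.trusted_source or task.uses_live_tools or task.needs_judgment:
        return STRONG_MODEL
    return LOCAL_MODEL


if __name__ == "__main__":
    tasks = [
        Task("Extract NAV from custodian PDF", True, False, False),
        Task("Read email from unknown sender and open link", False, True, False),
    ]
    for task in tasks:
        print(f"{task.description} -> {choose_model(task)}")
```

The rule deliberately fails toward the stronger model: a task has to be trusted, tool-free, and well-scoped before it goes to the cheaper one.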

3. Context belongs in files, not chat windows

This is the lesson I’m most grateful for, because it changed how I work, even on tasks the agent isn’t involved in.

Andrej Karpathy has a useful phrase for this: an LLM Wiki.

The idea is simple: instead of re-explaining context to a model every time you sit down to work with it, you maintain a persistent, compounding knowledge base in plain text (in practice, Markdown). When you discover something useful (a quirk of a data source, a definition the model keeps tripping over, a workflow that finally works), you write it down. The next time, the model starts where you left off.

This is the opposite of how most people use chatbots. Most treat each conversation as a fresh start, re-deriving the same context from raw documents over and over, then wondering why the model “forgets.”

OpenClaw maps almost perfectly onto this model. Memory lives as Markdown in the workspace. There is no hidden state: what the agent knows is what’s in the files. Files like AGENTS.md, SOUL.md, and USER.md get loaded into each run, so the agent starts with the same baseline understanding of what it is, what I care about, and how I want it to behave.
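
For a sense of what "loaded into each run" could mean mechanically, here is a minimal sketch that assembles a baseline context from those files. It is an assumption about the general pattern, not OpenClaw's actual loader: the `build_context` function and the way the sections are joined are hypothetical.

```python
from pathlib import Path

# File names taken from the text above; the loading logic is illustrative only.
BASELINE_FILES = ("AGENTS.md", "SOUL.md", "USER.md")


def build_context(workspace: Path, filenames=BASELINE_FILES) -> str:
    """Concatenate the baseline Markdown files that exist in the workspace."""
    sections = []
    for name in filenames:
        path = workspace / name
        if path.is_file():  # silently skip files that are not present
            text = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {name}\n\n{text}")
    return "\n\n".join(sections)


if __name__ == "__main__":
    # Prints whatever baseline files exist in the current directory.
    print(build_context(Path(".")))
```

Because the context is rebuilt from disk on every run, editing a file is all it takes to change what the agent starts with.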

The practical effect is that my workflow has shifted from prompting to curating. When I notice the agent making the same mistake twice, I don’t tweak the prompt. I ask the agent to write a paragraph in the relevant Markdown file explaining the mistake. The next morning, the mistake is usually gone.
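
The curating step itself amounts to appending a dated note to a file. A minimal sketch, assuming a hypothetical `record_lesson` helper and a made-up file name (`LESSONS.md`); in practice the agent writes the paragraph itself, so this only shows the shape of the result.

```python
from datetime import date
from pathlib import Path
from typing import Optional


def record_lesson(memory_file: Path, lesson: str, today: Optional[date] = None) -> None:
    """Append a dated lesson to a Markdown memory file, creating it if needed."""
    today = today or date.today()
    # The leading newline keeps the entry on its own line even if the file
    # does not currently end with one.
    entry = f"\n- {today.isoformat()}: {lesson.strip()}\n"
    with memory_file.open("a", encoding="utf-8") as handle:
        handle.write(entry)


if __name__ == "__main__":
    # "LESSONS.md" is a made-up file name for this example.
    record_lesson(Path("LESSONS.md"), "Check which column holds the NAV before comparing.")
```

Appending rather than rewriting keeps a visible history, which makes it easy to see later which notes actually changed the agent's behavior.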

The knowledge compounds, and the agent gets better the way an analyst gets better: by accumulating institutional memory instead of starting from zero every Monday.

There is a quieter benefit, too. I own the files. When I eventually move on from OpenClaw, I can hand the next agent a ready-made knowledge base instead of starting from scratch.

I don’t think I would have predicted any of this a month ago. I thought I was building a tool. I was, in fact, being slowly and patiently taught how to manage one. The deeper shift is from prompts to process, from one model to a model fleet, and from chat history to institutional memory.

The Englishman in the queue, meanwhile, has learned to parse in parallel.